Deep Joint-semantics Reconstructing Hashing For Large-scale Unsupervised Cross-modal Retrieval

Su Shupeng, Zhong, Zhang. Arxiv 2024

[Paper]

[Code]

ARXIV

Cross Modal

Has Code

Unsupervised

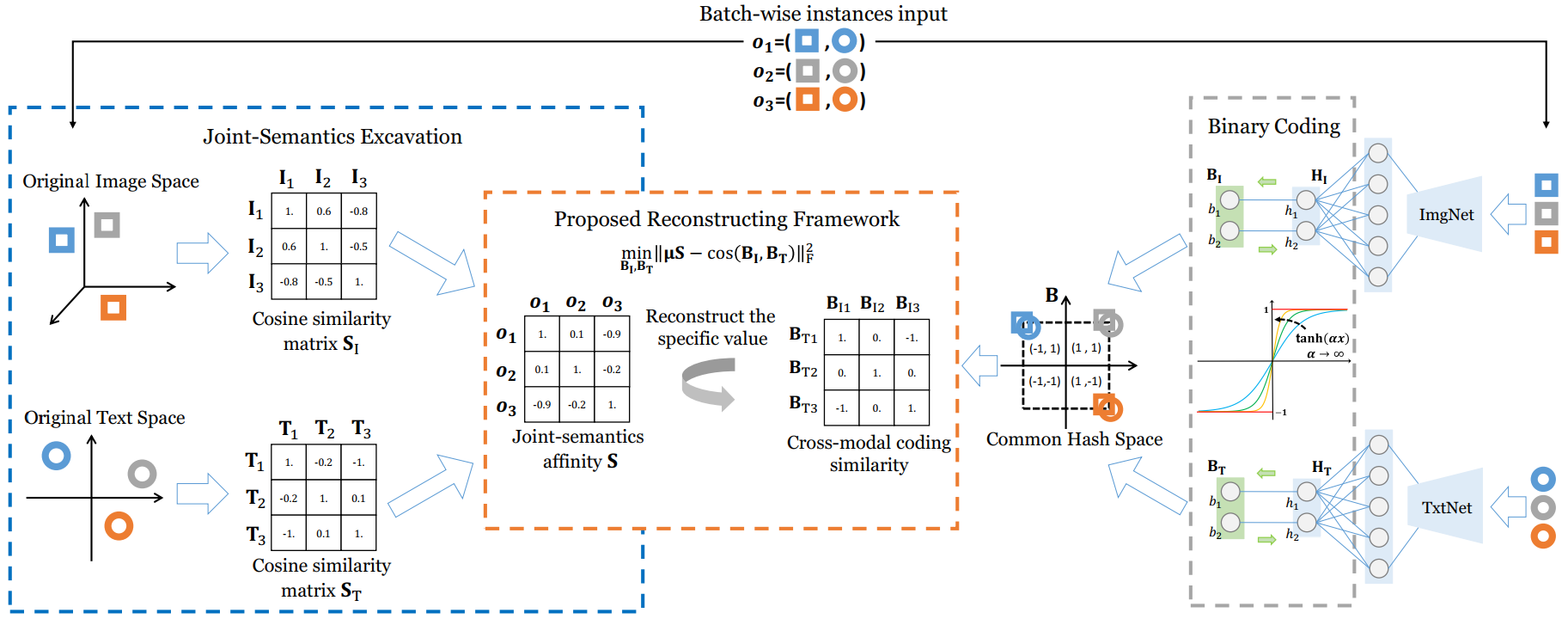

Cross-modal hashing encodes multimedia data into a common binary hash space in which the correlations among samples from different modalities can be effectively measured. Deep cross-modal hashing further improves retrieval performance, as deep neural networks can generate more semantically relevant features and hash codes. In this paper, we study unsupervised deep cross-modal hash coding and propose Deep Joint-Semantics Reconstructing Hashing (DJSRH), which has two main advantages. First, to learn binary codes that preserve the neighborhood structure of the original data, DJSRH constructs a novel joint-semantics affinity matrix that integrates the original neighborhood information from the different modalities and is therefore capable of capturing the latent intrinsic semantic affinity of the input multi-modal instances. Second, DJSRH then trains the networks to generate binary codes that maximally reconstruct the above joint-semantics relations via the proposed reconstructing framework, which is better suited to batch-wise training because it reconstructs the specific similarity values, unlike the common Laplacian constraint, which merely preserves the similarity order. Extensive experiments demonstrate significant improvements by DJSRH in various cross-modal retrieval tasks.
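
The two ideas in the abstract, fusing per-modality neighborhood information into one affinity matrix and then training codes to reconstruct its values, can be illustrated with a small NumPy sketch. This is a minimal sketch under stated assumptions, not the authors' implementation: the abstract gives no formulas, so the function names, the cosine-similarity choice, the second-order term, and the weights `beta`, `eta`, and `mu` are all hypothetical choices made here for illustration.

```python
import numpy as np


def unit_rows(matrix):
    """Scale every row to unit L2 norm (guarding against zero rows)."""
    norms = np.linalg.norm(matrix, axis=1, keepdims=True)
    return matrix / np.maximum(norms, 1e-12)


def cosine_similarity_matrix(features):
    """Pairwise cosine similarity between the rows of `features`."""
    unit = unit_rows(features)
    return unit @ unit.T


def joint_affinity(image_feats, text_feats, beta=0.5, eta=0.4):
    """Fuse per-modality neighborhood structure into one affinity matrix.

    Assumption: `beta` mixes the two modalities and `eta` mixes in a
    second-order term (samples are similar if their similarity rows are).
    Both weights are illustrative, not values from the paper.
    """
    s_img = cosine_similarity_matrix(image_feats)
    s_txt = cosine_similarity_matrix(text_feats)
    fused = beta * s_img + (1.0 - beta) * s_txt
    batch_size = fused.shape[0]
    second_order = fused @ fused.T / batch_size
    return (1.0 - eta) * fused + eta * second_order


def reconstruction_loss(codes_a, codes_b, affinity, mu=1.5):
    """Squared Frobenius gap between scaled affinity and code similarity.

    The loss targets the similarity *values*, not just their ordering.
    `mu` is a hypothetical scaling factor for the target affinity.
    """
    code_similarity = unit_rows(codes_a) @ unit_rows(codes_b).T
    return float(np.sum((mu * affinity - code_similarity) ** 2))


# Toy batch: 8 paired samples with random stand-in features.
rng = np.random.default_rng(0)
image_feats = rng.normal(size=(8, 16))
text_feats = rng.normal(size=(8, 12))

affinity = joint_affinity(image_feats, text_feats)

# Relaxed (tanh) codes stand in for network outputs during training.
image_codes = np.tanh(rng.normal(size=(8, 32)))
text_codes = np.tanh(rng.normal(size=(8, 32)))

cross_modal_loss = reconstruction_loss(image_codes, text_codes, affinity)
print(affinity.shape, cross_modal_loss)
```

Because the affinity matrix is computed from the samples in the current batch only, a loss of this form can be evaluated per mini-batch without a global neighborhood graph, which is the property the abstract contrasts with Laplacian-style constraints.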

Similar Work

- Unsupervised Hashing With Contrastive Information Bottleneck

- High-order Nonlocal Hashing For Unsupervised Cross-modal Retrieval

- Joint-modal Distribution-based Similarity Hashing For Large-scale Unsupervised Deep Cross-modal Retrieval

- Efficient Cross-modal Retrieval Via Deep Binary Hashing And Quantization